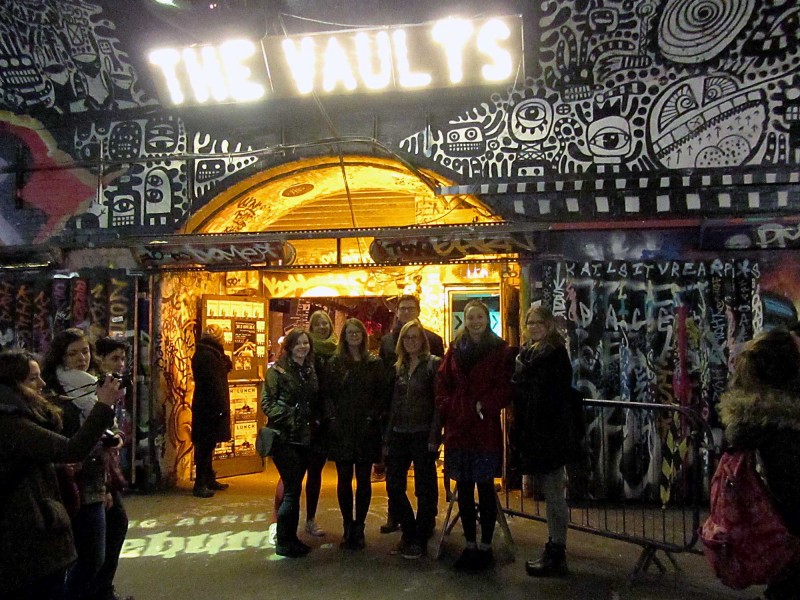

As part of the 2018 Vault Festival, we slunk through the tunnels under Waterloo station to catch AI Love You, an interactive play that explores morality, consciousness and relationships. Michael sets the scene.

AI Love You at Vault Festival

As we filtered into the small theatre, the actors were seated, scanning us nervously. One male, one female; two ‘ordinary’ twenty-somethings.

Mysteriously, we were handed small blank sheets of paper as we took our seats. Except they weren’t all blank: our colleague Anna had a black cross on hers. We thought little of it at the time.

The characters introduced themselves: Adam and April. They should be a happy couple, but something is wrong. The dynamic is skewed. It soon becomes clear that April is terminally ill and wants the right to end her own life with dignity before her condition worsens. Adam disagrees. He wants to nurse her; he doesn’t care about her condition, he just wants to be with her for every moment he can.

The Cog team, outside The Vaults in Waterloo

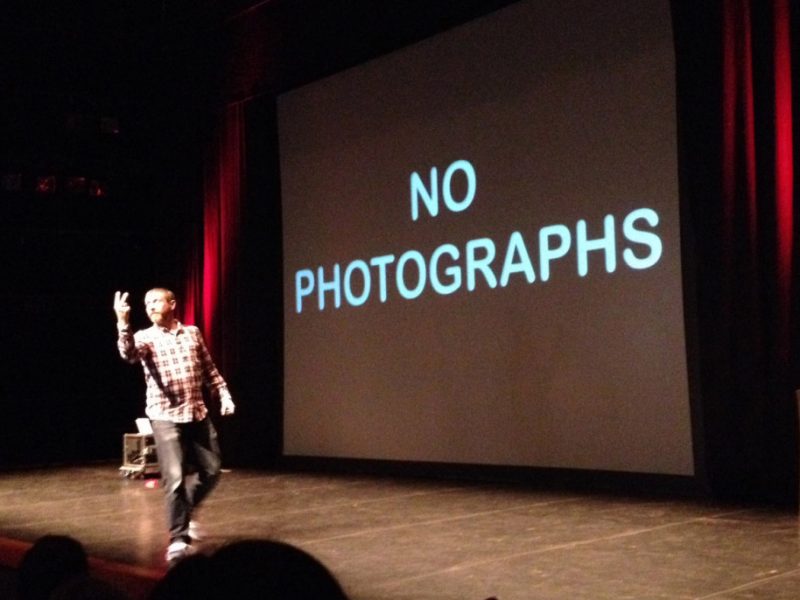

A klaxon bellows, followed by an officious announcement.

A member of the Creative Biolife corporation explains that we are sitting in on a live experiment: a hearing in which we are to be the ‘ethical steering committee’. At the end of their statements, we are asked to vote on whether we agree with Adam or April. Should we allow April to die, or keep her alive for the sake of Adam?

We should vote using our sheets of paper – held up for April, down for Adam.

Then the announcer explains that one of us needs to be the Chairperson, to count the votes and report back on the results. X marks the spot: our (somewhat reluctant) Anna is the Chair.

Anna is announced as our Chair

The dilemma would usually be fairly clear-cut: surely April should be allowed to choose her own fate.

But things get complicated when it’s revealed that April isn’t human. She’s a first-generation AI built to synthesise a relationship with Adam, and her illness is a degenerative coding bug. For now it’s just causing occasional glitches and memory loss, but it’ll get worse, and she can’t stand what that will do to him.

April appeals to the audience. She can no longer fulfil her primary function of serving her partner/owner and therefore needs to be euthanised (or shut down and wiped perhaps). This throws up some interesting moral ambiguity.

If April’s primary function was to please Adam then ending her life feels like the antithesis of that function.

Does April have the capacity for pain and, if so, is her physical pain more real than Adam’s emotional trauma?

Do a human’s needs always outstrip those of a machine? Even a sentient one?

Is she sentient, or is it all programming? Are human emotions valid, or are they all just biological programming? If so, should future AIs be afforded equal rights to humans?

The Cog team, queuing in the corridor before the show

April and Adam make their case and we vote. Anna has to stand, count the ups and downs of our sheets of paper, and announce the results. It’s a fairly even split.

To add to the moral confusion, Adam tells us that when April is shut down, her image will be used for Biolife’s ad campaign, and she’ll be reprogrammed and sold off to another customer. The prospect of potentially seeing the former love of his life with another partner was, Adam professed, too much to bear.

But if she were reprogrammed, she would be a different ‘woman’. So is he in love with the image of April? Or is he in love with the idea of her, or with the feelings and memories she evokes in him?

The impassioned arguments went back and forth, and we were asked to vote several times. Interestingly, by now our audience was tipping in April’s favour. The instinct to put easing one person’s physical pain (even simulated pain) above another’s emotional trauma is, apparently, a very human one, despite the unusual circumstances.

Near the end, each protagonist was asked to leave whilst the other made their final appeal. April made a strong case that, despite what she said in front of Adam, her argument was all about protecting him. He had become isolated from his friends and fixated on her; he was a less fulfilled human being with her than without her. This was, she told us, a selfless act: she wanted to save him from what he became when he was with her.

So was her ‘condition’ imagined? Had she invented it in order to save him? Was she in fact fulfilling her primary function of protecting him? Might this be the predetermined end to all human/AI relationships? Was it even a satire on all long-term human relationships?

A paperweight from Adam’s memory box, a reminder of a special day out with April

Their time for arguments was over and the audience were invited to ask questions. Rather sweetly, most of our questions were about love. Do you love her? Do you think he loves you? The actors did a great job of handling the improvisation, in character.

And then the actors froze and we were invited to debate the topics among ourselves. Anna, our Chair, had to stand at the front and lead the debate (which she did brilliantly). There were some insightful and profound comments from amongst us; it was clear that the play had forced us all to consider the implications before us.

And then the time for debate was over. The voice of the Creative Biolife corporation asked us to make one last vote and we passed our judgement.

I won’t spoil the ending. I guess the verdict can change each night. And apparently so do the actors: Eve Ponsonby and Peter Dewhurst swap between the AI and human roles each evening.

AI Love You is poignant and timely. For the first time, we can glimpse a future where human-like AI machines could be our constant companions. Perhaps it won’t be long until we’re forced to have these discussions outside of the theatre.

Related links:

AI Love You was a Heart to Heart Theatre production

Read more about The Vault Festival on their site

Illustration by Marianna Madriz for our Cultural Calendar.